Investigation exposes child safety risks online

A major investigation by The Guardian revealed how criminals have used Facebook and Instagram to facilitate the sexual exploitation and trafficking of minors. The reporting ultimately became part of a legal case that resulted in Meta being ordered to pay $375 million in civil penalties in March 2026 for violating child protection laws in New Mexico.

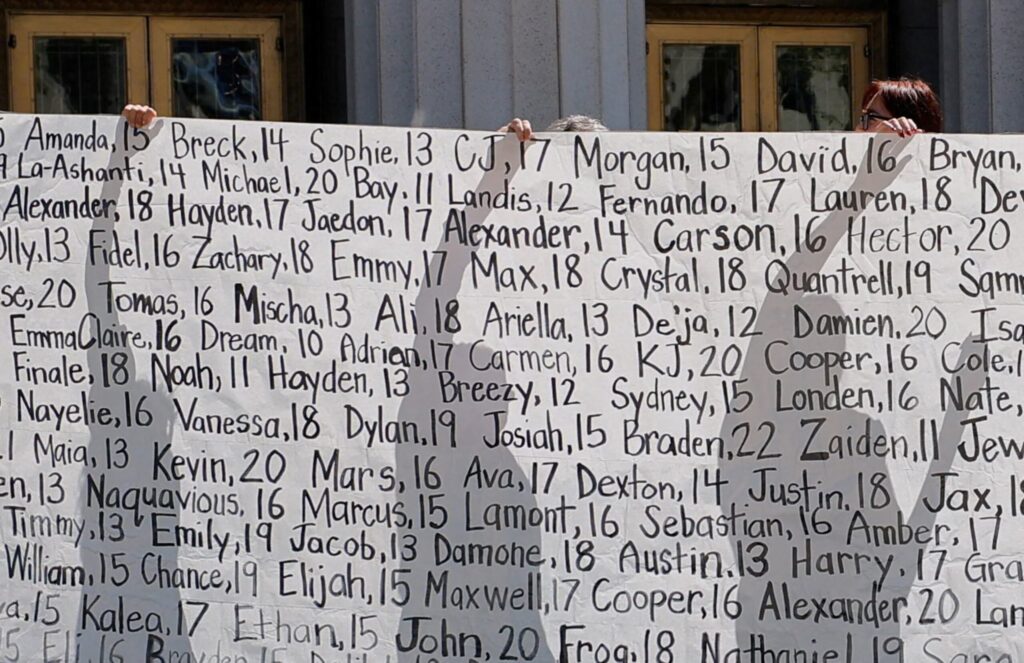

The investigation began in 2021 after a trusted source informed journalists that online child exploitation cases were rising sharply during the Covid-19 pandemic. As more people spent time online, predators increasingly used social media platforms to contact, groom and exploit vulnerable young people. Journalists worked alongside human rights experts and law enforcement officials who described a growing trend of traffickers using private online spaces, such as Facebook Messenger and private Instagram accounts, to arrange illegal transactions involving minors.

To gather evidence, reporters reviewed public legal records and examined criminal cases involving online trafficking. Court documents included transcripts of conversations in which traffickers negotiated the sale of minors using social media messaging tools. Images used to advertise victims were sometimes shared through Instagram Stories. According to investigators, much of this activity was not detected or flagged by Meta’s systems.

Interviews with former content moderators revealed that many felt overwhelmed by the disturbing material they were required to review. Several said their attempts to escalate cases involving suspected child exploitation often did not result in action being taken. Some believed company policies for reporting serious crimes were too restrictive.

Journalists also spoke with anti-trafficking organisations supporting survivors. Experts explained how traffickers often identify vulnerable teenagers through their online activity, then manipulate them emotionally before exploiting them commercially. Law enforcement officials reported that cases of child trafficking connected to social media had increased significantly in recent years, partly due to the greater amount of time young people spend online.

The investigation, published in April 2023, highlighted concerns that social media platforms were being misused as tools to facilitate exploitation. Although technology companies in the United States are often protected from liability under Section 230 of the Communications Decency Act, which shields platforms from responsibility for user-generated content, the reporting drew significant legal attention.

New Mexico’s attorney general later filed a lawsuit alleging that Meta had allowed its platforms to become spaces where predators could locate and exploit minors. The Guardian’s investigation was cited in court filings supporting the case. In March 2026, a jury ruled against Meta, and the company was ordered to pay $375 million in civil penalties. Meta has stated that it plans to appeal and maintains that it continues to invest in safety measures for young users.

Further reporting has suggested ongoing challenges in preventing online exploitation. Critics have also raised concerns about encrypted messaging features, which increase privacy but may limit the ability of companies and authorities to detect harmful activity. During the trial, testimony suggested that relying only on users to report abuse may be less effective than proactive detection systems.

Legal scrutiny of social media companies is expected to continue. Several additional lawsuits filed by state attorneys general claim that certain platform features may negatively affect young users’ wellbeing or expose them to harmful content. Meta has said it will defend itself in court and emphasises its commitment to improving safety tools for teenagers.